SHORT NEWS

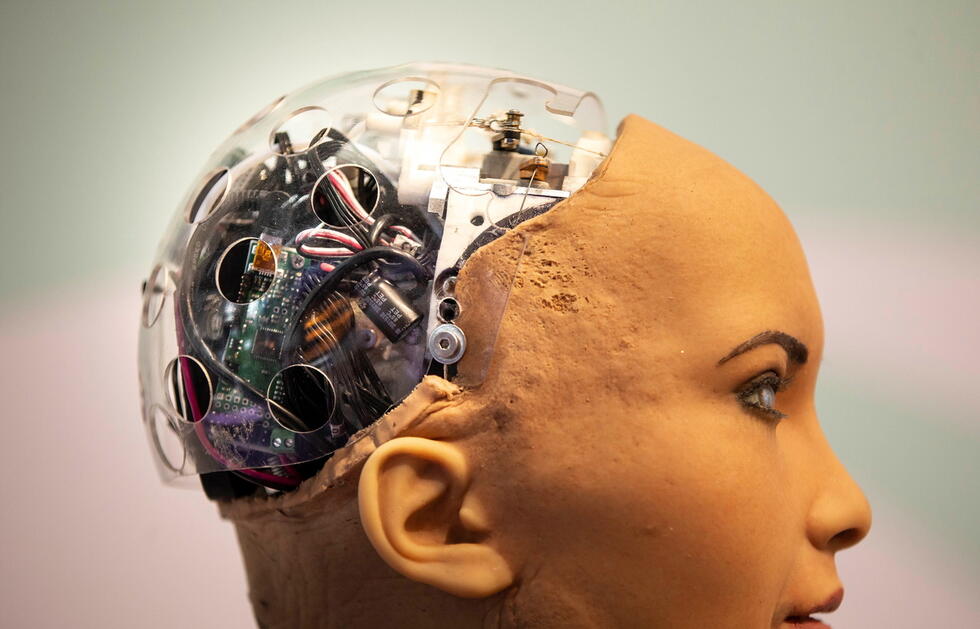

The world is divided over ethical artificial intelligence

Artificial intelligence is making huge strides. Ideally, its use should not harm anyone. But how can this be guaranteed? Governments, companies, and organizations answer this question very differently, as researchers from Zurich have shown.

Artificial intelligence (AI) arouses many fears: that adaptive algorithms and intelligent automation will cost people their jobs, that AI could be used to the detriment of the population, or that it could discriminate against certain groups of people, either by learning human prejudices or by being trained on unbalanced data sets.

In response to such fears, governments, organizations, and the private sector have developed ethical guidelines. But as researchers working with Effy Vayena at the Swiss Federal Institute of Technology (ETH) in Zurich report, ideas about what the ethically responsible use of AI should look like differ widely.

The research team collected 84 documents setting out ethical principles and guidelines for AI. These range from guidelines issued by companies that develop or use AI, to reports and discussion papers from expert panels and universities, to documents from government agencies and international organizations.

No single ubiquitous principle

“These ethical guidelines for AI have sprung up like mushrooms in recent years”, said study author Anna Jobin of ETH Zurich in an interview with the news agency Keystone-SDA. “Therefore, we wanted to provide an overview of where these guidelines come from, which ethical principles are mentioned, and how strongly these are weighted.”

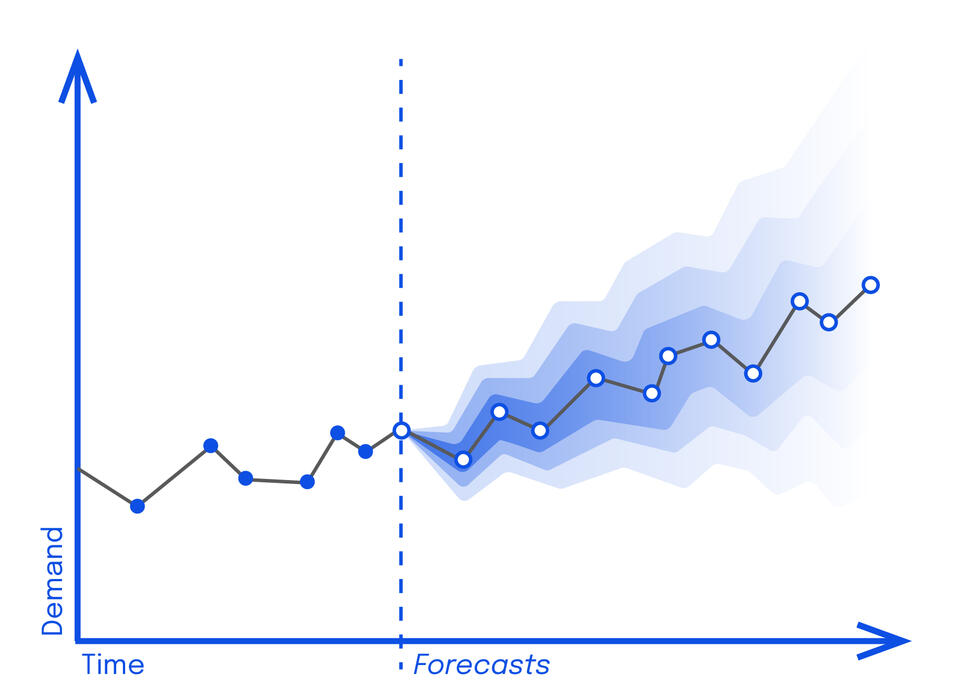

No single basic ethical principle appeared in all of the documents, the researchers write in the journal “Nature Machine Intelligence”. They identified a total of eleven principles, which, however, occurred at very different frequencies. Five cornerstones emerged, each mentioned in more than half of the guidelines: transparency, justice and fairness, the prevention of harm, responsibility, and data protection. However, the precise meaning of these principles and the proposed ways of achieving them sometimes differed considerably.

Transparency was the most frequently cited basic principle, appearing in 73 of the 84 documents. This buzzword covers efforts to explain the technology behind AI and its use in a more understandable way, as well as to disclose how and for what purpose data is used, the source code, the limits of the technology, or even liability.

In particular, the documents emphasize understandable communication and, with it, verifiability by external supervisory bodies (audits). The main objective of transparency in the use of AI is to prevent misuse or undesirable side effects of the technology. This basic principle therefore also extends to the other four cornerstones, for example by helping to prevent bias and discrimination in decisions taken by AI.

Full of contradictions

“It is astonishing how contradictory the documents themselves are in some respects”, Anna Jobin said. For example, one frequently cited goal is to prevent discrimination by collecting even larger and thus more balanced data sets. “In the same document one suddenly finds the ethical principle of data protection as well as the fact that users should have control over their data. This is difficult to reconcile with the collection of even larger volumes of data.”

While transparency stands at the forefront of ethical guidelines, other principles such as trust, solidarity, and sustainability are rarely mentioned. Anna Jobin: “One can now question whether this is justified. Can AI really be ethical if it does not fulfill the principle of sustainability? Thanks to this overview, we now know where we need to focus even more in the future.”

Overall, the researchers found that the number of documents from the private sector and from public institutions was balanced; in other words, both sectors were equally concerned with a responsible approach to AI.

Debate dominated by rich countries

However, it emerged that certain regions of the world have so far hardly participated in the debate on the ethics of AI. Africa, South and Central America, and Central Asia in particular are under-represented.

This reveals a global imbalance: The discussion is dominated by industrially developed countries, which could lead to a lack of local knowledge, cultural pluralism, and global fairness in the debate. “If we want AI to be global, the whole world should have a say”, Anna Jobin stated.

Rather than continually creating new guidelines, the debate should perhaps focus more on the processes of how to arrive at basic ethical principles about AI and how these should be implemented, the researcher explained. “What our evaluation has clearly shown is that the devil is in the detail.” There is no simple answer to the question of the ethically responsible use of AI. “But ethics is a process, not a checklist.”